If you are an APEX developer who just wants to move to the cloud, there are some things that you should be aware of. Here is my proposal for the steps needed to “cloud enable” APEX developer accounts. This post is only about setting up an account, not about any nifty features to use.

Scenario: We are an admin who already created a new APEX workspace and now wants to give some developers access to that workspace.

The actions were confirmed on the Oracle Free Tier cloud featuring a 19c database and APEX 20.2. There is a good chance that some of the obstacles have been removed in newer versions. At the time of writing it is possible to set up a 21c database on the Oracle Free Tier cloud.

Admin actions

1. create APEX developer account

Inside the workspace create a new APEX account.

Administration/Manage Users and Groups/Create User

Set it to developer. Set and remember the password. You will notice that, when switching developer on, the “Require Change of Password on First Use” button is deactivated.

APEX developer and admin accounts in the cloud are created as database users (in uppercase). This has several major implications.

- The developer can NOT log in to the workspace and change the password. For admins it is possible to set a new password using the admin page. Trying to change the password the normal way via “change my password” results in a success message, but the change is silently ignored. I assume this is the reason why the “Require Change of Password on First Use” button is deactivated.

- The password rules of the database apply. On Autonomous Transaction Processing (ATP) the accounts use the default profile, which uses the CLOUD_VERIFY_FUNCTION.

- Using the same developer name across different workspaces means the same password is used for all of them. I think this is a welcome feature, but it certainly is a change from what one is used to on other platforms, such as on premises or apex.oracle.com.

- If the developer username has special characters in it, for example a dot or an @ as in “firstname.lastname@my.email”, then the database user name always needs to be put in double quotes later: "firstname.lastname@my.email". Although that works, it prevents certain other options, like REST-enabling the account (ORA-20018: Schema or user name must be a simple unquoted literal).

I strongly recommend using only letters, numbers and underscores for the name (simple literals). No dots, no @, no blanks or any other special characters. For the specific rules see the docs:

Nonquoted identifiers must begin with an alphabetic character from your database character set. …

From https://docs.oracle.com/en/database/oracle/oracle-database/19/sqlrf/Database-Object-Names-and-Qualifiers.html#GUID-75337742-67FD-4EC0-985F-741C93D918DA

Nonquoted identifiers can only contain alphanumeric characters from your database character set and the underscore (_), dollar sign ($), and pound sign (#). … Oracle strongly discourages you from using $ and # in nonquoted identifiers.

- The option to create multiple users via one dialog does not work properly for developer accounts. Do not use this dialog! (It is possible to use it for end users.)

Side note: End users are not created as database users. This means we can change the end user password using the normal APEX logic. The other implications do not apply to them either.
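To see how the implications above play out on an existing instance, the following queries are a minimal sketch. They assume you are connected as ADMIN (or another user that may read the DBA views) and that checking for "letters, numbers and underscores only" is a good enough test for a simple name.

```sql
-- Accounts not maintained by Oracle, with the profile they use.
select username, profile, account_status
from   dba_users
where  oracle_maintained = 'N'
order  by username;

-- Which password verify function does each profile enforce?
select profile, resource_name, limit
from   dba_profiles
where  resource_name = 'PASSWORD_VERIFY_FUNCTION';

-- Accounts whose names are NOT simple literals and therefore
-- always need double quotes (and cannot be REST-enabled).
select username
from   dba_users
where  oracle_maintained = 'N'
and    not regexp_like(username, '^[A-Z][A-Z0-9_]*$');
```

Any name returned by the last query is a candidate for being recreated with a simple name before the developer starts working with it.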

2. enable the developer to connect with SQL Developer

enable connect

By default the newly created database user cannot create a session. We need to grant that privilege so that he/she can connect and change the password.

grant create session to <developer>;

proxy connect

A developer usually needs full access to the application schema (with the tables in it) that he/she is working with. To avoid distributing the application schema password, one can set up a proxy connection. This means the developer authenticates him/herself using the individual password, not the password of the application schema.

alter user <application_schema> grant connect through <developer>;

There are additional options, like setting an extra password for the proxy connect or allowing only specific roles. For the typical developer scenario those extra settings do not seem necessary.
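For completeness, here is a sketch of the role restriction, plus how an admin could check and revoke a proxy grant. DEMODB, DEV_ACCOUNT and DEMO_READ_ROLE are hypothetical names. The role is assumed to be already granted to the application schema, and the session is assumed to have access to the PROXY_USERS view.

```sql
-- Allow the proxy connect, but activate only one role of the target schema.
-- Assumption: DEMO_READ_ROLE exists and is granted to DEMODB.
alter user demodb grant connect through dev_account with role demo_read_role;

-- List which accounts may connect through which other accounts.
select proxy, client, authentication, flags
from   proxy_users
order  by client, proxy;

-- Take the proxy permission away again, e.g. when a developer leaves the project.
alter user demodb revoke connect through dev_account;
```

The revoke statement is worth keeping next to your list of who received the wallet: removing access is then a matter of one statement per application schema.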

Side note: A proxy connection is what APEX also uses to connect to the schema using only the APEX_PUBLIC_USER.

send wallet

To access the cloud database from a local client a key is needed. Oracle provides this key in a PKCS#12 wallet inside a wallet.zip file. The admin can download this wallet from the database service console. This is a database instance wallet that is only valid for this specific database. There are also regional wallets that are valid for all databases inside a region.

Write down who you sent the zip to. This is important since the admin might want to rotate the wallet. I prefer long intervals for the wallet rotation, but it should be rotated from time to time (for more information see: Rotate Wallets for Autonomous Database).

Make the developer understand that he/she is not allowed to give the wallet to other users/developers.

Side note: There is an API to handle wallets (https://docs.oracle.com/en-us/iaas/api/#/en/database/20160918/AutonomousDatabaseWallet/UpdateAutonomousDatabaseWallet). Using this API one could automatically rotate the wallet at defined intervals.

Developer actions

3. wallet handling

Store the wallet_INSTANCENAME.zip into some local folder.

In general there is no need to unzip the wallet file. A notable exception would be if you want to use edition-based redefinition. I wrote about this before:

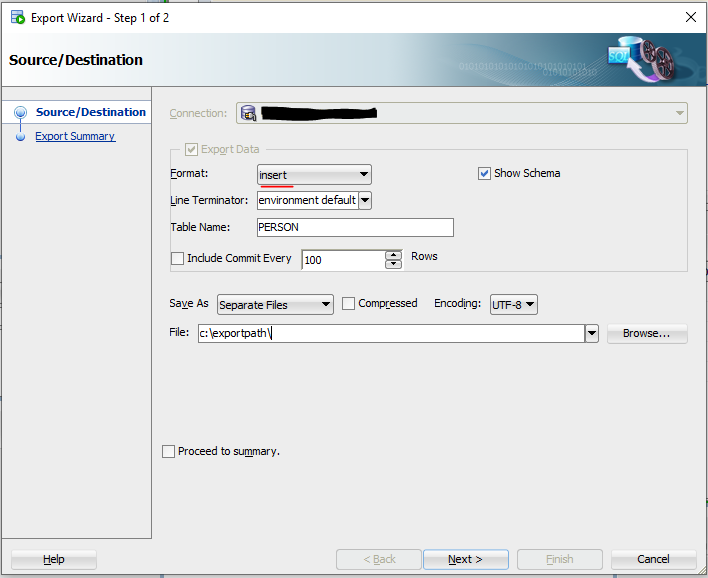

4. set up SQL Developer

Two connections are recommended.

One for the developer account itself. This one is needed to easily change the password. Additionally, most developers like to have an environment where they can try out stuff. Further privileges might be needed for that (create view, etc.).
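What "further privileges" means depends on your project. As a rough sketch, an admin could grant something like the following. DEV_ACCOUNT is a hypothetical developer account, and the tablespace name DATA and the quota size are assumptions that you should check against your own instance.

```sql
-- Run as ADMIN. Lets the developer create a few object types in his/her own schema.
grant create table, create view, create procedure, create sequence to dev_account;

-- Without a quota the developer cannot store any rows in his/her own tables.
-- Assumption: DATA is the tablespace used for user data on this instance.
alter user dev_account quota 100M on data;
```

Keep this sandbox small. The real work happens in the application schema via the proxy connection.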

And one connection for the application schema using a proxy connect.

Connecting to the cloud from a local (and recent) SQL Developer installation is simple. Choose “Cloud Wallet” as the connection type, point it to the wallet zip file, choose the TNS alias (service) and you're done. On the free tier you should choose the “xxx_low” alias.

To create a proxy connect, the name of the target schema (in my example DEMODB) needs to be configured under the tab “Proxy User”.

I also suggest adding this schema to the name of the connection. I use [schemaname] for that, because that is the syntax for proxy connects in SQL*Plus.

SQL> connect "SVEN.WELLER"[DEMODB]/mysecretPwd1111
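Once connected, a quick way to confirm that the proxy connect really did what was intended is to ask the session itself. This is a small sketch using the standard USERENV context. With the example above, the expectation is that the session user is the application schema and the proxy user is the individual developer account.

```sql
-- Run in the worksheet of the proxy connection.
select sys_context('userenv', 'session_user')   as session_user,    -- the application schema
       sys_context('userenv', 'proxy_user')     as proxy_user,      -- the individual developer account
       sys_context('userenv', 'current_schema') as current_schema
from   dual;
```

In a normal, non-proxy connection the proxy_user column is simply empty.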

5. change the password

There are multiple ways to reset a password using SQL Developer. The good thing is that they also work for cloud connections (option a requires a recent JDBC driver).

a) Right-click on the connection and choose “Reset Password” . This also works for proxy connects and does reset the password for the individual user.

b) Type the password command in a connected worksheet

This changes the password for the schema. For a normal connection without a proxy this changes the password of the connected user.

In case of a proxy connect it tries to change the password for the PROXY client.

Since the developer will not have this password, he/she will not be able to change it.

c) And it is possible to change the password using the ALTER USER command. Everybody can do this for their own password even without enhanced privileges.

If the username has special characters in it, like the dot in my case, then do not forget to put double quotes (") around the name. Regardless of the special characters, the name will be in UPPERCASE. And of course the password rules apply.

In addition, in the cloud environment the original password needs to be supplied with the alter user command. Otherwise the command results in the following error message:

ORA-28221: REPLACE not specified

*Cause: User is changing password but password verification function is turned on and the original password is not specified and the user does not have the alter user system privilege.

*Action: Supply the original password.

The solution is easy: just add the old password to the alter user command. The complete syntax is

alter user <developerschema>
  identified by <new password>
  replace <old password>;
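As a filled-in illustration, this is how the statement could look for the account from the SQL*Plus example above. Both passwords are placeholders, and the new one is only assumed to satisfy the password rules of your instance.

```sql
-- The user name contains a dot, so it must be double-quoted and in UPPERCASE.
-- Passwords with special characters need double quotes as well.
alter user "SVEN.WELLER"
  identified by "NewSecret#2222x"
  replace "mysecretPwd1111";
```

Keep the statement on consecutive lines when running it in SQL*Plus, since a blank line in the middle ends the statement input there by default.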

Conclusion

In the cloud each APEX developer is a database user. Treat it like one.

Jeff Smith shows a brand new option to manage DB users using SQL Developer web: https://www.thatjeffsmith.com/archive/2021/03/creating-users-granting-storage-quota-using-database-actions/