Problem

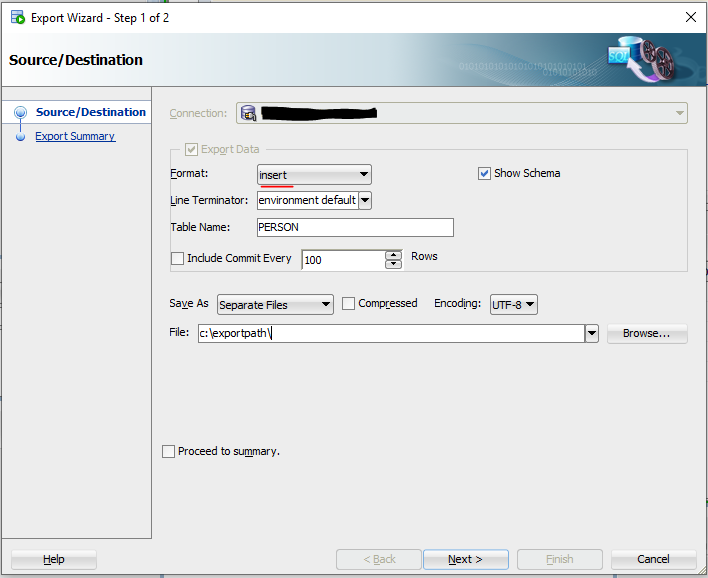

Unfortunately when we export data using the SQL developer export functionality it also exports data for virtual columns. While this is certainly useful for spreadsheet exports, it is rarely sensible for insert statements.

Any insert into a virtual column fails with error ORA-54013.

SQL Error: ORA-54013: INSERT operation disallowed on virtual columns

54013. 0000 – “INSERT operation disallowed on virtual columns”

*Cause: Attempted to insert values into a virtual column

*Action: Re-issue the statment without providing values for a virtual column

One way to avoid this is to set the virtual column to invisible before the export is started. SQL Developer will not export the data from invisible columns. But changing the data model in the source just to get a little more convenience is usually not the way to go. Remember that setting columns to invisible and visible again will put these columns at the end of the logical column list. Which could be a problem if code depends on the column order (which it shouldn’t). Also this requires to export the data again.

If you already have an export file with a lot of inserts, the following dirty little trick might help you.

Temp column trick

We create a new real column, insert the data into this column and throw it away afterwards. The virtual column is temporarily moved out of the way while doing so.

Lets assume we have a table persons and a virtual column that concatenates the columns firstname and lastname into fullname.

create table person (id number,

firstname varchar2(100),

lastname varchar2(100),

fullname as lastname||', '||firstname);

We rename the target column and temporarily add a new column at the end of the table. The insert statements produced by SQL Developer have the column names included in the insert, so they do not depend on column order.

alter table person rename fullname to "_FULLNAME";

alter table person add fullname VARCHAR2(1000);

Then run the insert scripts that were created by SQL Developer.

SET DEFINE OFF;

@PERSON_DATA_TABLE.sql

After the data was inserted, restore the old columns.

alter table person drop column fullname;

alter table person rename "_FULLNAME" to fullname;

Automation

And here are two selects that generate all those statements for a complete schema. The second select needs to run before the generated code from the first select is executed. Otherwise the columns in the dictionary already have been changed.

Preparation Script SQL

select 'alter table "'||table_name||'" rename "'||column_name||'" to "_'||column_name||'";'||chr(10)||

'alter table "'||table_name||'" add "'||column_name||'" '||case when data_type in ('VARCHAR', 'VARCHAR2','CHAR','NCHAR','NVARCHAR2') then 'VARCHAR2(4000 byte)' else data_type end||';'

as pre_insert_ddl

from user_tab_cols

where virtual_column='YES'

--and user_generated='YES'

and hidden_column = 'NO';

Restore Script SQL

select 'alter table "'||table_name||'" drop column "'||column_name||'";'||chr(10)||

'alter table "'||table_name||'" rename "_'||column_name||'" to "'||column_name||'";'

as post_insert_ddl

from user_tab_cols

where virtual_column='YES'

--and user_generated='YES'

and hidden_column = 'NO';

Restrictions and side effects

The scripts are not perfect. For example if you have a column name that is already at maximum identifier length (e.g. 30 or 128 characters long) then adding _ in front of the column will produce an error. I think this situation is very rare and should best handled by manually correcting the scripts.

Varchar2 columns are expected to be equal or less than 4k, which might not be correct when extended_string_size is used in newer DB versions.

Some special datatypes might need extra consideration. In general the datatype of the new column does not matter as long as it doesn’t produce an error during insert.